On Friday 22nd, 2026, at Monash, design researchers presented artifacts from their practice: Jon McCormack’s language device with personality sliders, Bec Nally and Yaw Ofosu-Asare’s Mars-based pedagogy, Scott Mayson’s prosthetic casting system, and work on the Queer Stories installation. What struck me wasn’t the objects themselves but how they all resisted clean resolution and commodifiable outcomes.

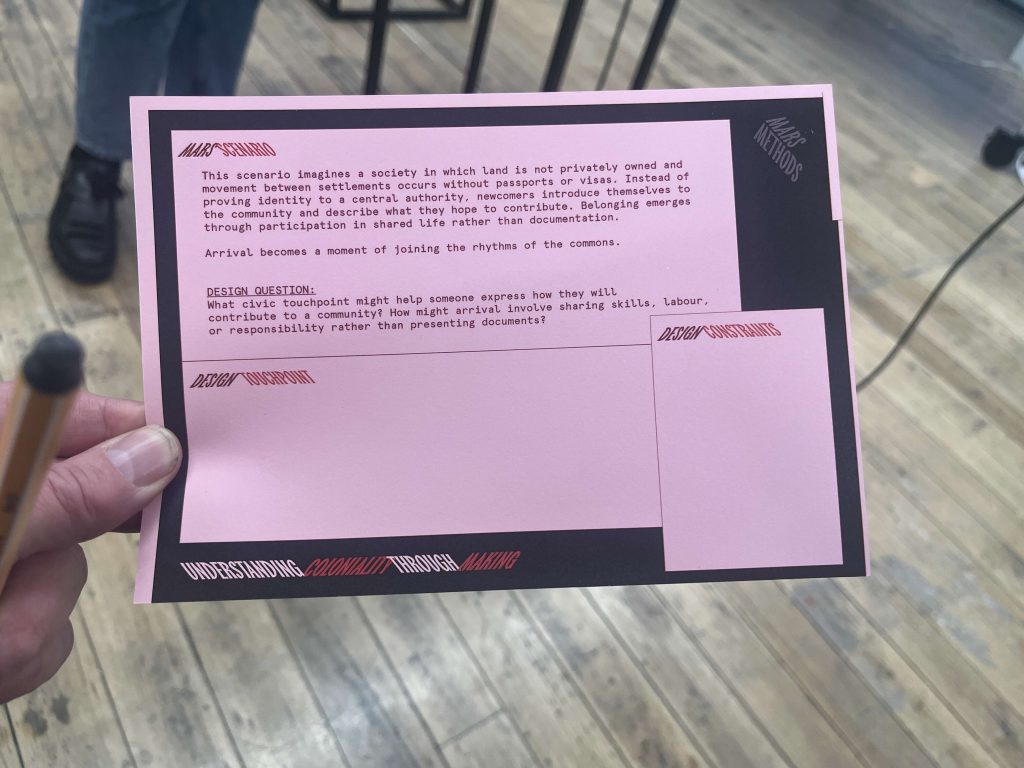

The Mars Pedagogy

Bec and Yaw described teaching decolonial design through a speculative Mars scenario. Rather than lecturing about colonialism, they invited students to navigate two contradictory governance logics simultaneously: compliance-based systems on Earth and relational systems on Mars. The pedagogical move, abstraction through speculation, was clever. But what happened in practice was more interesting.

Early weeks were genuinely confusing. Students thought Mars was a metaphor. They didn’t understand the logics they were supposed to be learning, and some said they were frustrated. The instructors could have simplified, explained more clearly, and resolved the confusion. Instead, they named it: this discomfort, they told students, is where learning happens.

By semester’s end, students understood something about holding contradiction that lectures could never convey. They’d lived the tension between two ways of thinking at once. Months spent shuttling between logics, designing systems that only made sense in one framework or the other, and discovering that some problems couldn’t be solved by choosing one logic over another changed how they thought about design itself. They couldn’t unknow what they’d learned through that practice.

The Language Device

Jon McCormack presented a quiet, beguiling object: a surface where you place magnetic words, with the vocabulary constrained to 50 words. Limiting the vocabulary pushed the LLM into semantically unexpected territory. But the real discovery came through use. People didn’t interact with it as prescribed. They played with it collectively, explored its personality controls (optimist/pessimist, dreamer/practical, serious/playful), and used it as a thinking partner rather than a productivity tool.

What fascinated Jon was that people treated language as material. They held the words in their hands, felt their weight, and moved them slowly across the surface. The e-paper display kept the last response visible, so you could sit with it and return to it, unlike a chat interface that scrolls away. The constraint generated unexpected possibility: limiting what the device could do made it more useful for actual thinking.

The Prosthetic System

Scott Mayson described a different kind of constraint: working with technicians in Indonesia on a pressure-casting system for prosthetics. In the Global North, fitting a prosthesis takes five days of artisan work that depends on skilled, embodied knowledge. Scott’s team didn’t try to automate that away. They iterated based on local knowledge, stripped the system to essentials (Teflon tape, water pressure, body weight), and made it field-repairable in conflict zones and trainable without specialized equipment.

The crucial move: they didn’t impose solutions from the Global North. They worked with technicians as collaborators, not users. The design only worked through an ongoing relationship with communities. That’s not a feature to be optimized away. It’s the point.

What Connected Them

All three projects treated fragmentation, constraint, and unresolved tension not as problems to fix but as epistemology itself. They understood that genuine knowledge emerges through living with contradiction, not resolving it. They made space for what couldn’t be quantified or commodified: the embodied confusion, the slow thinking, the local adaptation, the relational work.

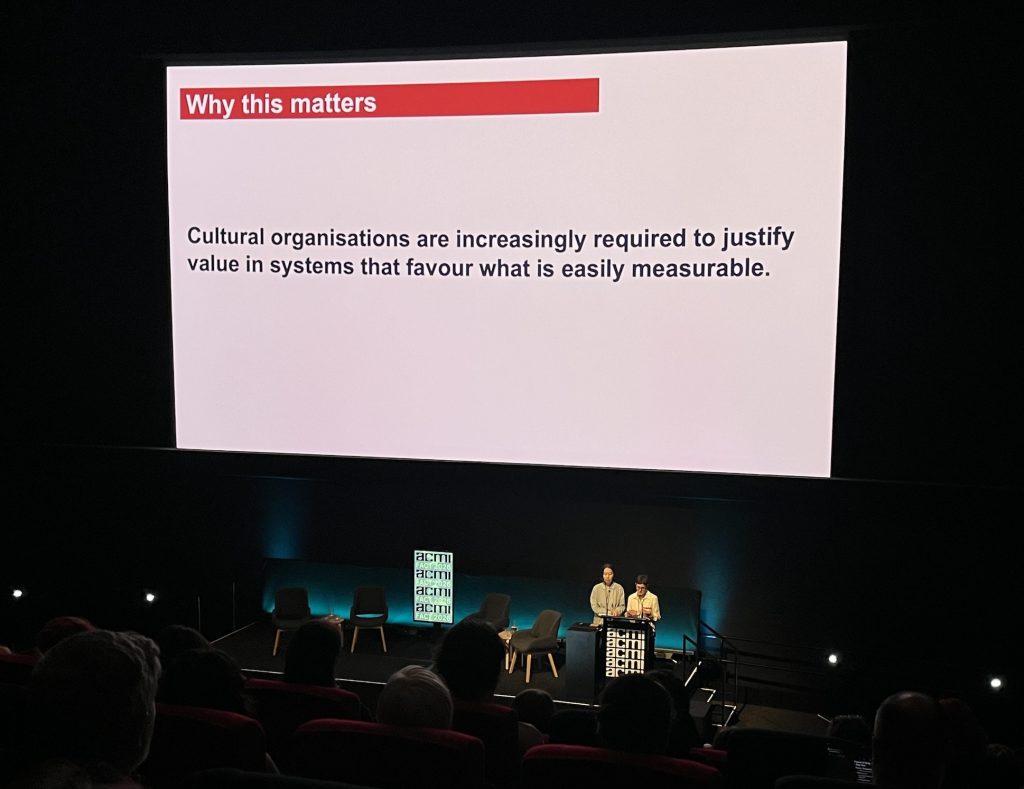

Robert’s Refusal

Days later, Robert Nugent premiered Signatures of Earth, a documentary that deliberately rejects conventional structure. Screen Australia had turned it down because it doesn’t follow Griersonian guidelines. That rejection clarified his approach: he was reacting against filmmaking that demands a narrative arc, character-driven jeopardy, and resolved endings. The three-act structure is so embedded that its absence registers as something missing.

Instead, he created something that layers vantage points: scuba diving, glass-bottom boats, drone footage positioned as “alien sentience,” and archival images from space. Voices overlap without synthesis. Settler and Indigenous narratives occupy the same sonic space, and the film never resolves them into coherence. You sit with the fragments, the contradictions, the incomplete stories.

He was honest about the cost. He’d wanted collaborators such as editors and sound designers but couldn’t afford them. So he cut the film himself, designed the sound himself, and sat alone with the material for months. There’s loneliness embedded in the work. The fragmentation and the refusal to provide easy answers came at a personal price.

The Connection

Both events staged resistance to the same demand: for commodifiable, clearly communicated, resolvable knowledge. Both rejected clean narrative. Both embraced fragmentation. Both understood constraint as generative.

But they approached the cost differently. Robert experienced loneliness in the making. The design educators built collaboration into their practice: the circle way, sitting together, naming discomfort collectively. Yet both understood that genuine learning requires sitting in tension rather than resolving it.

What struck me: the form is the argument. Robert doesn’t describe non-narrative cinema; you experience it, and your understanding of what cinema can do shifts. Bec and Yaw don’t explain decolonial thinking; you inhabit the contradiction. Jon and Scott don’t claim their artifacts are finished solutions; they show them as living, iterative, and dependent on ongoing relationship.

Knowledge created through genuine constraint, real collaboration, and honest fragmentation might communicate more truthfully than anything optimized for clarity and consumption.

Both events demonstrated: the form is the message.